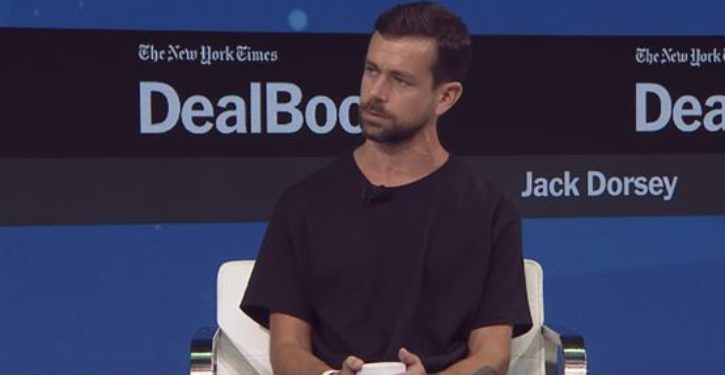

Twitter CEO Jack Dorsey, reeling from widespread accusations that Twitter systematically “shadow-bans” conservative users and their tweets, announced on Monday that Twitter has selected a group of academic partners in two programs to improve the “conversational health” of the Twittersphere. In his words, it’s the first step in a process:

Our first goal is working to measure the “health” of public conversation, and that measurement be open and defined by third parties (not by us).

An update! We’ve selected 2 partners from 230 idea submissions. Our first goal is working to measure the “health” of public conversation, and that measurement be open and defined by third parties (not by us). https://t.co/QjUg5P1RLZ

— jack (@jack) July 30, 2018

This initiative isn’t likely to have a salutary impact on Twitter’s market health, which suffered a notable setback on Friday 27 July.

But clearly @jack has other priorities. The “health of public conversation” is a pretty big mess of vittles to bite off.

It does seem a bit risky, after losing 20% of your market cap in a single day, to basically put share value in the hands of third parties who are going to define “measurement” criteria (?) for something as abstract and ill-bounded as the “health of public conversation.” Not a classic approach to regaining market strength and wooing back investors. With Twitter actively repudiating any involvement in defining the measurement of whatever the heck it’s doing here, I – if I were invested in Twitter – would be unable to get rid of my remaining shares fast enough.

But set that aside for now. The important thing is that the two projects have been selected, and the academic partners identified.

Axios has the 411 on the two projects. (See the link at Jack Dorsey’s tweet, above, for more.)

1. Echo chambers and uncivil discourse: This project will measure the extent of how much Twitter users interact and acknowledge a variety of viewpoints.

[…]

2. Interactions and decreasing prejudice: This project will study how user behavior on Twitter can (or not) decrease prejudice and discrimination when interacting with users of diverse viewpoints.

Again, these efforts appear especially unlikely to improve the kind of health Twitter needs to remain commercially viable as a social media platform.

But, moving on. You can check out the academic teams at the Axios link as well. One of them is from Syracuse University, and on Monday morning, diligent tweeps found within moments an interesting communication from a Syracuse team member during the Republican National Convention in 2016.

https://twitter.com/patyrossini/status/756329790907490304

The gist:

summarizing tonight: hate hate hate WALL hate hate hate LGBTQ hate hate hate BAN IMMIGRATION hate hate hate LAW&ORDER #RNCinCLE

Ms. Rossini will be working on program number 1, “echo chambers and uncivil discourse.”

That’s certainly informative. But wait; there’s more.

As Axios summarizes it, program #1 will:

- … work on developing algorithms that can distinguish between incivility and tolerance.

- Uncivil discourse, which breaks the norms of politeness, can serve important functions in political discourse. Intolerance, on the other hand, is defined as including hate speech, racism, and so on, and goes against democracy, per Twitter and the researcher team.

This somewhat cryptic passage, whose meaning seems a bit dubious on reflection, does mean what it sounds like.

It means that attacking people with invective, curse words, and so forth “can serve important functions in political discourse.”

But saying substantive things that other people don’t want to hear “goes against democracy,” and constitutes intolerance. Intolerance is impermissible. It is “incompatible with, and has the potential to damage, normative values of democratic pluralism, freedom of expression and equality,” to quote Patricia Rossini, from pp. 10-11 of a paper she delivered to the International Communication Association at its conference in Prague in May 2018.

Rossini’s thesis in the paper is basically an elaborate justification for assigning value to pejorative speech and ad hominem assaults (incivility), while creating a category of speech (intolerance) that will confer an open-ended license to silence voices and points of view.

This isn’t about Twitter trying to do better by freedom of thought or expression. It’s about throwing an “academic” cloak over partisan censorship.

The really great part, from my perspective, is bootstrapping in the incivility. You have to admit it’s genius. Twitter mobs screaming filthy words at people and spraying spittle all over them to brand them as “racist” and “fascist”? Not a big deal. Can be held in many instances to promote healthy dialogue, or at least to be legitimately necessary as an attention-getter, or method of “marking positions in heated discussions.”

But “political intolerance,” which is every bit as much in the eye of the beholder as incivility (indeed, I would argue it is more so)? The definition of political intolerance on various issues is, in fact, precisely what people disagree on. Assuming what it means in advance, and making it an unappealable criterion for conversational ostracism, does the opposite of promoting public conversational health.

Yet Rossini argues for prohibiting the disagreement by branding disagreement itself as “intolerance” and “moral disrespect,” while permitting “disrespectful expressions, personal attacks, bad manners, pejorative speech, vulgarity and rude remarks.”

From her paper (more extensive quote from pp. 10-11; the passage on “negative attitudes towards certain groups” marks exactly where political partisans don’t agree on what constitutes those attitudes, or how they are to be defined):

I propose a distinction between political intolerance – comprising behaviors that are inherently threatening to democratic pluralism – and incivility – the use of disrespectful expressions, personal attacks, bad manners, pejorative speech, vulgarity and rude remarks. Based on prior studies (Herbst, 2010; Mutz, 2016; Shea & Sproveri, 2012), I conceptualize incivility as a context-dependent feature of discourse that may convey a rude, negative or disrespectful tone towards people, groups and discussion topics (Coe et al., 2014). Conversely, political intolerance is defined as attacks on individual liberties and rights, demonstrations of negative attitudes towards certain groups defined in terms of attributes such as race, sex, gender or religion, xenophobia, the use of stereotypes that are harmful or demeaning towards individuals or groups, and incitement to violence or harm. Political intolerance threatens, or at least signals lack of, moral respect – a condition for individuals to be recognized as free and equal in a pluralist democracy (Habermas, 1998; Honneth, 1996).

The novelty of this approach lies in acknowledging that incivility might be used as a rhetorical asset to mark positions in heated discussions, as well as to grant attention to one’s perspective, especially when there is disagreement. In this sense, while the nature of online discussions – with reduced social and contextual cues, as well as weak or non-existent social ties (Hmielowski et al., 2014; Papacharissi, 2004; Rowe, 2015; Santana, 2014) – may facilitate the use of uncivil discourse, I believe these expressions are not necessarily incompatible with democratically desirable political talk online, nor they should prevent these discussions from having similar benefits as those often attributed to political conversation that is not uncivil. The same is not true for intolerant discourse, as it signals moral disrespect and profound disregard towards other people or groups, and as such is incompatible with, and has the potential to damage, normative values of democratic pluralism, freedom of expression and equality (Gibson, 1992; Hurwitz & Mondak, 2002).

Shutting down free communication on issues, while assigning “conversational” value to bad manners and vulgarity? Sounds like a recipe for putting Antifa in charge of our public conversational health.

We’ll see what the other academic partners of Twitter’s conversational-health initiative have to say. Given this beginning, it’s a little hard to be hopeful on that head.